When ChatGPT debuted, Generative Artificial Intelligence (GAI) appeared to change the world overnight. Writing a blog post, editing photography, and creating architectural renderings could all be done with a few simple prompts. Microsoft GitHub Copilot even offers to write code for users, and 1.5 million programmers already rely on its OpenAI integration to create original code, fix errors, and even translate between programming languages.

However, we are still in the early days of engineering the AI technology stack, so much so that GAI is still uneconomical for even the largest service providers. Frequent Copilot users cost Microsoft as much as $80 each month in resources. AI inference requires an enormous amount of computational power.

Every time a user enters a new query, it costs AI providers compute time to answer that question. As engineers continue to master latency and deliver instant response times on queries, even more strain will be added to the system as usage grows. Unless providers can solve the commercial viability riddle, the incredible potential of AI will never be achieved.

d-Matrix is well positioned to unleash this potential with an architecture-changing approach that dramatically reduces Total Cost of Ownership (TCO) for generative AI. Why Playground led the company’s $44 million Series A has been well documented. Our venture team knew as far back as 2020 that d-Matrix’s datacenter technology would make GAI economical in ways that developers could only dream of at the time.

***

As early-stage investors, we break down the different layers of the technology stack in certain domains and develop investment theses at each level.

In AI, we invested in hardware companies like d-Matrix, Next Silicon, and Ayar Labs, infrastructure software like MosaicML (recently acquired by Databricks), and vertical solution companies including Pandion, Ultima Genomics, and Atomic AI. In quantum, we also start with the hardware layer, backing leaders like PsiQuantum, and building a software play on top of that with companies such as Phasecraft.

What continues to excite us about d-Matrix is their potential to flip the cost equation of AI by offering best-in-class back-end hardware at a dramatically improved TCO. Their chips will drive the cost curve down and allow AI service providers to deploy inference at a lightning-fast pace. The experience will be as seamless as a Google search for directions to dinner or looking up the name of a new doctor. With d-Matrix, AI goes from enormously promising to commercially viable.

The proof points for the company are stacking up:

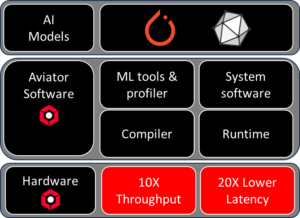

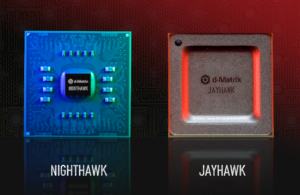

- Taped-out proof-of-concept chips. d-Matrix has successfully de-risked the hardware they are building and validated the chiplet, interconnect, and memory computing technology. Their work is far from just an idea buried in a pitch deck; it’s long been a real taped-out product, with customers ready for delivery.

- Traction with customers. d-Matrix already boasts multiple enterprise-grade customers, with volume orders in hand. These customers know Nvidia, Qualcomm, Intel, and AMD’s products well, and still recognize the value of the d-Matrix solution.

- Enormous strides in software. d-Matrix has been a software-first semiconductor company since day zero. Software is the interface customers interact with, meaning a chip is nothing without customer-facing delivery and a full software stack that can integrate with the most powerful tools developers already use. d-Matrix has already been making software drops to customers for revenue, which shows the emphasis the team has placed on software.

- Strong executive and engineering teams. Playground helped d-Matrix put in place world-class leaders and a stellar team of engineers to deliver on the company’s vision.

- Closed Series B Funding. In September 2023, the company announced $110 million in funding, a staggering up-round at a time when capital remained tight. This is a particularly impressive feat in the semiconductor industry, where Nvidia, AMD, and Qualcomm often crowd out new entrants under the best of circumstances.

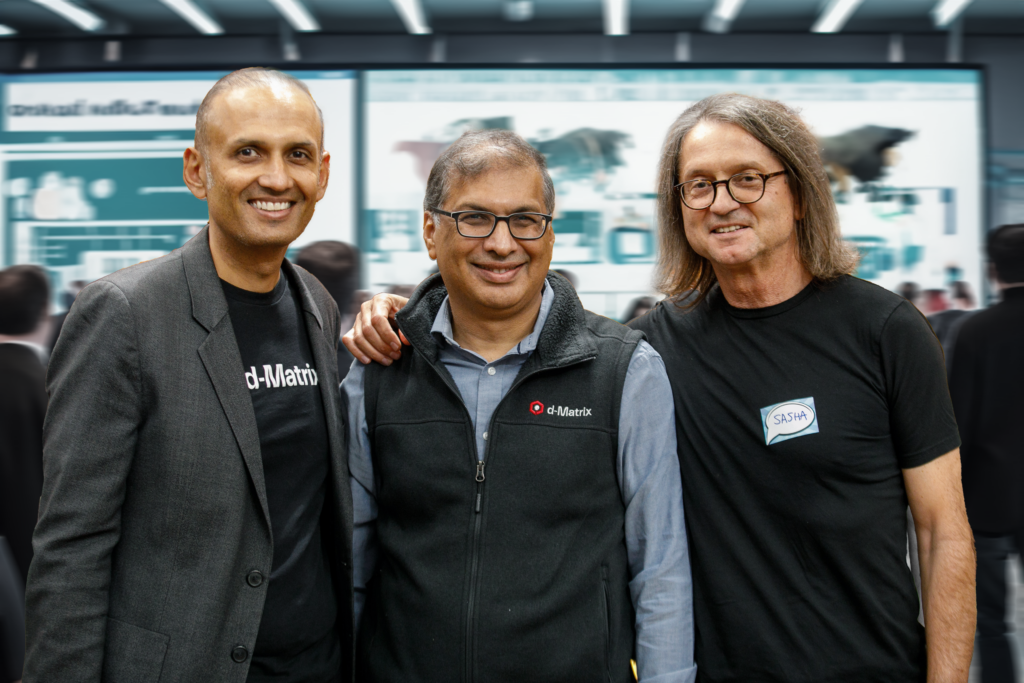

Playground has served as a partner throughout d-Matrix’s existence. Our team has helped source technical talent, advised on organizational design and scaling, and helped develop PR, analyst relations, and a brand strategy. Playground Venture Partner Sasha Ostojic has even run quarterly software reviews with the d-Matrix team for the last three years.

“Sasha and the broader Playground team have been exceptional partners for d-Matrix. We couldn’t be more grateful for Playground’s support,” Sid Sheth, Founder and CEO of d-Matrix, said. “They are there for us when we need them and have been additive across fundraising, talent acquisition, strategy and operations. We look forward to their continued support on our journey together, achieving our shared vision of making GAI commercially viable for all.”

***

Sasha, who focuses on AI, next-gen compute, robotics and automation at Playground, has played a long-time role with d-Matrix. In March 2020, Sasha was introduced to Sid Sheth and Sudeep Bhoja, the co-founders of d-Matrix, over Zoom. The three began holding regular meetings covering everything from chip development to software. d-Matrix’s initial team had already successfully taped out over 40 chips across their careers and brought with them a strong software-first philosophy, which intrigued Sasha. Soon, d-Matrix asked him to come on board, and Sasha brought in Playground shortly after.

“If there’s anything I learned in my 10 years at Nvidia, it’s the fact that software is what differentiates semiconductor companies,” Sasha Ostojic, a Venture Partner at Playground and board member at d-Matrix, explained. “Sid and Sudeep understood that and incorporated it within their vision.”

As d-Matrix began considering a Series A, Sasha and Sid realized that they lived less than a mile away from one another. The two neighbors began meeting at the Los Altos Grill every other Sunday to discuss the company. At these “date nights,” as the two of them jokingly call the meet-ups, Sasha helped formulate the strategy to capture the data center inference market and even decide when to raise. These meet-ups began when the company was about 10 people, and they continue to this day.

***

Any semiconductor venture worth its salt needs a roadmap. Some companies will recognize a gap in the market, deliver an exceptional chip, and then have no follow-on ideas. The market will eventually catch up with every breakthrough, either replicating the insight or leapfrogging the value it provides. Companies that grow to the size of an Nvidia never stop innovating.

The d-Matrix team has a plan for multiple generations of chips and the incremental improvements each one will bring to the market. Between test chips and product silicon, they have delivered a tape-out every year since inception, with the aim of launching a new product every two years. Customers are lining up for the second generation, but one of the company’s challenges will be go-to-market development.

We at Playground look forward to supporting them on their journey. With a Series B in hand, d-Matrix will continue to push towards making generative AI commercially viable, and every generative AI query as simple, cost-effective, and scalable as a Google search.